Advanced Paradigms in Equity Analysis: Integrating Big Data Architectures and Sentiment Intelligence1. Strategic Introduction to Modern Stock ForecastingIn the contemporary financial landscape, equity forecasting is no longer a peripheral activity but a core component of resilient business planning and risk mitigation. As global markets transition toward total digitization, the strategic advantage has shifted from traditional manual oversight to computational models capable of digesting the explosion of public domain intelligence. Modern quantitative strategies must now synthesize diverse data streams—ranging from Wikipedia edit volumes and real-time news feeds to social forum discourse—to capture the nuances of market sentiment. This paper delineates an integrated architectural framework utilizing Hadoop MapReduce for volatility assessment, PySpark for predictive price modeling, and a domain-specific dictionary-based approach for sentiment intelligence. Establishing this multi-layered analytical pipeline requires a sophisticated technical infrastructure designed to manage the extreme velocity and volume of modern financial datasets.2. Computational Foundations: Big Data Frameworks in FinanceTraditional monolithic data processing architectures frequently reach a “performance ceiling” when confronted with the high-frequency telemetry of modern equity markets. In an era where sub-millisecond trading and complex fraud detection are mandatory, distributed computing provides the horizontal scalability required to maintain operational alpha. By distributing computational workloads across a cluster of commodity hardware, firms can achieve the fault tolerance and throughput necessary for real-time market surveillance.The following table contrasts the two primary distributed engines utilized in our architectural stack:Feature Hadoop MapReduce Apache Spark (PySpark)Processing Mode Primarily Batch Processing Supports Batch and Real-time ProcessingSpeed/Efficiency Disk-based; high latency for iterative tasks In-memory; up to 100x faster than MapReduceEcosystem Components JobTracker (Master) and TaskTracker (Slave) Spark Core, SQL, MLlib, DataFrames, and StreamingWithin this ecosystem, PySpark’s MLlib and DataFrames are essential for abstracting the underlying distributed complexity. These high-level APIs simplify the implementation of robust machine learning pipelines, enabling the seamless execution of complex classification and regression tasks across massive partitions. This computational engine provides the necessary horsepower for the market behavior analysis that follows.3. Theoretical Market Mechanics and Volatility TheoryEquity markets are defined by non-linear dynamics and inherent uncertainty, making the strategic quantification of price fluctuations a prerequisite for capital preservation. Market behavior is rarely a steady-state phenomenon, and price stability is frequently interrupted by structural shifts.Stock Volatility serves as the primary metric for assessing systemic risk and relative performance, typically quantified through the variance of returns:* High Volatility: Characterized by significant price swings over compressed timelines, indicating elevated risk profiles often associated with speculative “market aggressors.”* Low Volatility: Characterized by tighter price ranges and steady trajectory, serving as a “benchmark leader” for conservative asset allocation.This volatility frequently challenges the Efficient Market Hypothesis (EMH), which posits that asset prices always reflect all available information. Historical anomalies, such as the 1987 Dow Jones collapse—where the index plummeted 22.6% in a single day—demonstrate that share prices often fluctuate independently of “new information.” Because market movements can be driven by psychological contagion or random noise rather than fundamentals, advanced distributed tools like MapReduce are required to identify hidden patterns within these fluctuations.4. Methodology: Multi-Phase Volatility Analysis via MapReduceTo optimize risk-adjusted returns, our methodology focuses on isolating the extremes of the market—identifying the “Top 10” stocks by volatility within a 1,000-stock NYSE dataset. This is achieved through a rigorous Three-Phase MapReduce Process:* Phase 1 (Data Mapping & Rate of Return): The initial mapper extracts Date and Adj Close (Adjusted Close) values. The reduction phase then aggregates the mapped pairs to compute the monthly geometric returns for each ticker.* Phase 2 (Volatility Calculation): The second phase processes the monthly returns to determine variance. The reducer applies the standard deviation formula—Math.sqrt(calc/(noOfMonths – 1))—to output the finalized volatility coefficient for each stock.* Phase 3 (Sorting & Final Extraction): The final phase performs a global sort of the volatility coefficients. A Cleanup method is executed post-task to programmatically isolate and output the 10 highest and 10 lowest volatility entities.Our analysis identified the following market leaders and aggressors based on 1000 NYSE stock entries:Benchmark Leaders: Low Volatility1. AGZD (0.0039)2. AGND (0.0107)3. AGNCB (0.0167)4. ALLB (0.0218)5. ACNB (0.0285)6. ACWI (0.0331)7. ACGL (0.0342)8. ACMX (0.0386)9. ADRA (0.0406)10. AAIT (0.0439)Market Aggressors: High Volatility1. ABIO (0.2468)2. AERI (0.2569)3. AGRX (0.2732)4. ALDX (0.3073)5. ALIM (0.3308)6. ADMP (0.3318)7. ACHV (0.3804)8. ALDR (0.3986)9. AFMD (0.4191)10. ADXS (0.4411)While volatility metrics quantify price movement, sentiment intelligence is required to capture the exogenous drivers behind these swings.5. Sentiment Intelligence: Dictionary-Based and Deep Learning AnalysisThe “Non-Linearities” of financial data render traditional linear models insufficient for modern sentiment gauging. Our framework utilizes a dual approach: a high-precision dictionary-based model and an exploratory Long Short-Term Memory (LSTM) Deep Learning architecture.Sentiment Pre-Processing Workflow:1. News Aggregation: Harvesting data from the New York Times, Reuters, and Yahoo Finance.2. Tokenization: Segmenting unstructured text into word-level vectors.3. Noise Removal: Stripping punctuation, finance-specific stop words, and geographical metadata.4. Stemming: Reducing words to their origin (e.g., “development” to “develop”) to consolidate feature space.The LSTM architecture was selected for its ability to resolve the “vanishing gradient” problem through Gated Cells (Input, Forget, and Output gates), allowing the network to maintain long-term dependencies in news sequences. However, our benchmarks reveal that a dictionary-based approach, leveraging a domain-specific financial lexicon, achieved a superior 70.59% accuracy in predicting short-term trend movements. In contrast, the LSTM model demonstrated significant volatility in its learning curve for this specific dataset.Analysis of the LSTM “Accuracy vs. Epoch” metrics indicates a significant divergence between training and validation sets. As epochs increased, training accuracy climbed toward 64%, while validation accuracy stagnated near 50-52%. This indicates a high risk of Overfitting, where the model memorizes noise rather than generalizes patterns, necessitating a preference for the more robust dictionary-based sentiment coefficients in current production environments.6. Predictive Modeling: PySpark MLlib and Decision Tree RegressorsThe final predictive layer integrates historical price action with a diverse array of macroeconomic indicators. We consolidated an 11-column dataset including Interest Rates, Exchange Rates, VIX, Gold, Oil, TEDSpread, and EFFR (Effective Federal Funds Rate).Feature Engineering and Vectorization To prepare this multi-source data for the MLlib API, we implemented a sophisticated pipeline:* StringIndexer & OneHotEncoding: Translating categorical features into high-dimensional binary representations.* VectorAssembler: This step is critical, as it consolidates all independent variables into the single “feature vector” required by the Spark MLlib API.Model Performance The Decision Tree Regressor was deployed as our primary predictive engine. It achieved an accuracy of 90%, significantly outperforming Support Vector Machines (SVM) and Logistic Regression by effectively capturing the non-linear relationships between macro-features and price targets.Model Evaluation Metrics | Metric | Result | Strategic Interpretation | | :— | :— | :— | | Mean Absolute Error (MAE) | 1.024 | Reflects high precision in continuous price variance prediction | | R-square Ratio | 70% | Exceeds the 60% industry benchmark for predictive model viability |Correlation analysis of these results confirms that positive news coefficients are directly linked to price appreciation, providing a statistically sound foundation for automated trading signals.7. Limitations, Future Horizons, and Investment ConclusionDespite the sophistication of distributed algorithmic frameworks, certain systemic boundaries remain.Critical Limitations* Exogenous Shocks: Algorithmic models struggle to account for “Black Swan” events or unpredictable acts of nature that override historical patterns.* Technical Risk: Over-reliance on technical analysis without integrated risk management can lead to catastrophic losses during structural market shifts.Future Horizons The roadmap for this architecture involves a transition to Real-time Trading Models utilizing Live Streaming Data. By migrating from batch-processed news to real-time event streams, we expect to further minimize the latency between sentiment shifts and executive action, boosting overall model alpha.Investment Recommendation Based on our integrated analysis of Apple (AAPL), the convergence of strongly positive sentiment scores and high-accuracy predictive modeling (R-square of 70%) justifies a “Positive” outlook. AAPL remains a high-conviction candidate for near-term profit-taking.The synthesis of Hadoop MapReduce, PySpark, and domain-specific sentiment intelligence represents the new standard for quantitative equity analysis. While no model can eliminate market risk, this multi-layered distributed architecture provides a statistically superior advantage for navigating the complexities of the global financial system.

Advanced Paradigms in Equity Analysis: Integrating Big Data Architectures and Sentiment Intelligence

1. Strategic Introduction to Modern Stock Forecasting

In the contemporary financial landscape, equity forecasting is no longer a peripheral activity but a core component of resilient business planning and risk mitigation. As global markets transition toward total digitization, the strategic advantage has shifted from traditional manual oversight to computational models capable of digesting the explosion of public domain intelligence. Modern quantitative strategies must now synthesize diverse data streams—ranging from Wikipedia edit volumes and real-time news feeds to social forum discourse—to capture the nuances of market sentiment. This paper delineates an integrated architectural framework utilizing Hadoop MapReduce for volatility assessment, PySpark for predictive price modeling, and a domain-specific dictionary-based approach for sentiment intelligence. Establishing this multi-layered analytical pipeline requires a sophisticated technical infrastructure designed to manage the extreme velocity and volume of modern financial datasets.

2. Computational Foundations: Big Data Frameworks in Finance

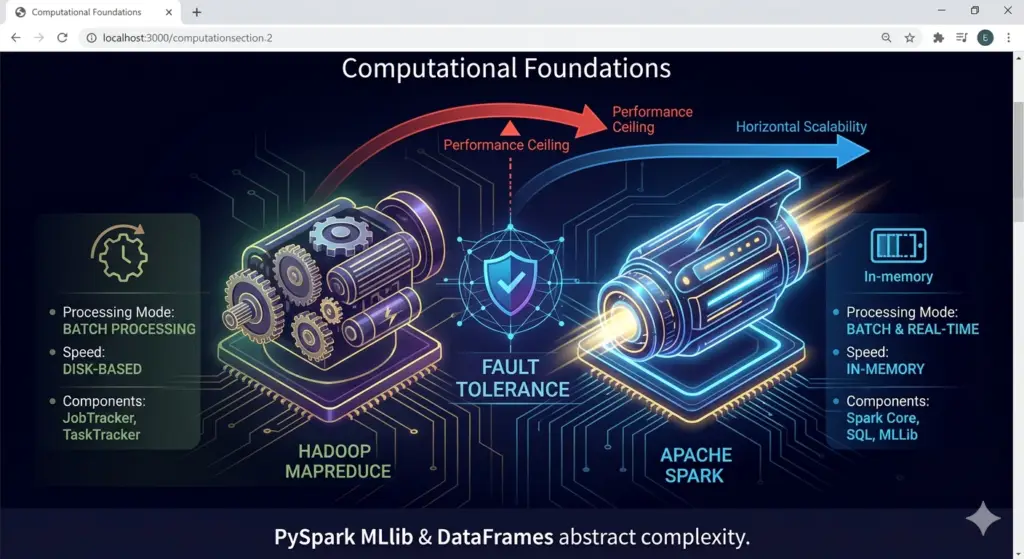

Traditional monolithic data processing architectures frequently reach a “performance ceiling” when confronted with the high-frequency telemetry of modern equity markets. In an era where sub-millisecond trading and complex fraud detection are mandatory, distributed computing provides the horizontal scalability required to maintain operational alpha. By distributing computational workloads across a cluster of commodity hardware, firms can achieve the fault tolerance and throughput necessary for real-time market surveillance.

The following table contrasts the two primary distributed engines utilized in our architectural stack:

| Feature | Hadoop MapReduce | Apache Spark (PySpark) |

| Processing Mode | Primarily Batch Processing | Supports Batch and Real-time Processing |

| Speed/Efficiency | Disk-based; high latency for iterative tasks | In-memory; up to 100x faster than MapReduce |

| Ecosystem Components | JobTracker (Master) and TaskTracker (Slave) | Spark Core, SQL, MLlib, DataFrames, and Streaming |

img { max-width: 100%; height: auto; display: block; } .my-photo { width: 300px; /*

Within this ecosystem, PySpark’s MLlib and DataFrames are essential for abstracting the underlying distributed complexity. These high-level APIs simplify the implementation of robust machine learning pipelines, enabling the seamless execution of complex classification and regression tasks across massive partitions. This computational engine provides the necessary horsepower for the market behavior analysis that follows.

3. Theoretical Market Mechanics and Volatility Theory

Equity markets are defined by non-linear dynamics and inherent uncertainty, making the strategic quantification of price fluctuations a prerequisite for capital preservation. Market behavior is rarely a steady-state phenomenon, and price stability is frequently interrupted by structural shifts.

Stock Volatility serves as the primary metric for assessing systemic risk and relative performance, typically quantified through the variance of returns:

- High Volatility: Characterized by significant price swings over compressed timelines, indicating elevated risk profiles often associated with speculative “market aggressors.”

- Low Volatility: Characterized by tighter price ranges and steady trajectory, serving as a “benchmark leader” for conservative asset allocation.

This volatility frequently challenges the Efficient Market Hypothesis (EMH), which posits that asset prices always reflect all available information. Historical anomalies, such as the 1987 Dow Jones collapse—where the index plummeted 22.6% in a single day—demonstrate that share prices often fluctuate independently of “new information.” Because market movements can be driven by psychological contagion or random noise rather than fundamentals, advanced distributed tools like MapReduce are required to identify hidden patterns within these fluctuations.

4. Methodology: Multi-Phase Volatility Analysis via MapReduce

To optimize risk-adjusted returns, our methodology focuses on isolating the extremes of the market—identifying the “Top 10” stocks by volatility within a 1,000-stock NYSE dataset. This is achieved through a rigorous Three-Phase MapReduce Process:

- Phase 1 (Data Mapping & Rate of Return): The initial mapper extracts

DateandAdj Close(Adjusted Close) values. The reduction phase then aggregates the mapped pairs to compute the monthly geometric returns for each ticker. - Phase 2 (Volatility Calculation): The second phase processes the monthly returns to determine variance. The reducer applies the standard deviation formula—

Math.sqrt(calc/(noOfMonths - 1))—to output the finalized volatility coefficient for each stock. - Phase 3 (Sorting & Final Extraction): The final phase performs a global sort of the volatility coefficients. A

Cleanupmethod is executed post-task to programmatically isolate and output the 10 highest and 10 lowest volatility entities.

Our analysis identified the following market leaders and aggressors based on 1000 NYSE stock entries:

Benchmark Leaders: Low Volatility

- AGZD (0.0039)

- AGND (0.0107)

- AGNCB (0.0167)

- ALLB (0.0218)

- ACNB (0.0285)

- ACWI (0.0331)

- ACGL (0.0342)

- ACMX (0.0386)

- ADRA (0.0406)

- AAIT (0.0439)

Market Aggressors: High Volatility

- ABIO (0.2468)

- AERI (0.2569)

- AGRX (0.2732)

- ALDX (0.3073)

- ALIM (0.3308)

- ADMP (0.3318)

- ACHV (0.3804)

- ALDR (0.3986)

- AFMD (0.4191)

- ADXS (0.4411)

While volatility metrics quantify price movement, sentiment intelligence is required to capture the exogenous drivers behind these swings.

5. Sentiment Intelligence: Dictionary-Based and Deep Learning Analysis

The “Non-Linearities” of financial data render traditional linear models insufficient for modern sentiment gauging. Our framework utilizes a dual approach: a high-precision dictionary-based model and an exploratory Long Short-Term Memory (LSTM) Deep Learning architecture.

Sentiment Pre-Processing Workflow:

- News Aggregation: Harvesting data from the New York Times, Reuters, and Yahoo Finance.

- Tokenization: Segmenting unstructured text into word-level vectors.

- Noise Removal: Stripping punctuation, finance-specific stop words, and geographical metadata.

- Stemming: Reducing words to their origin (e.g., “development” to “develop”) to consolidate feature space.

The LSTM architecture was selected for its ability to resolve the “vanishing gradient” problem through Gated Cells (Input, Forget, and Output gates), allowing the network to maintain long-term dependencies in news sequences. However, our benchmarks reveal that a dictionary-based approach, leveraging a domain-specific financial lexicon, achieved a superior 70.59% accuracy in predicting short-term trend movements. In contrast, the LSTM model demonstrated significant volatility in its learning curve for this specific dataset.

Analysis of the LSTM “Accuracy vs. Epoch” metrics indicates a significant divergence between training and validation sets. As epochs increased, training accuracy climbed toward 64%, while validation accuracy stagnated near 50-52%. This indicates a high risk of Overfitting, where the model memorizes noise rather than generalizes patterns, necessitating a preference for the more robust dictionary-based sentiment coefficients in current production environments.

6. Predictive Modeling: PySpark MLlib and Decision Tree Regressors

The final predictive layer integrates historical price action with a diverse array of macroeconomic indicators. We consolidated an 11-column dataset including Interest Rates, Exchange Rates, VIX, Gold, Oil, TEDSpread, and EFFR (Effective Federal Funds Rate).

Feature Engineering and Vectorization To prepare this multi-source data for the MLlib API, we implemented a sophisticated pipeline:

- StringIndexer & OneHotEncoding: Translating categorical features into high-dimensional binary representations.

- VectorAssembler: This step is critical, as it consolidates all independent variables into the single “feature vector” required by the Spark MLlib API.

Model Performance The Decision Tree Regressor was deployed as our primary predictive engine. It achieved an accuracy of 90%, significantly outperforming Support Vector Machines (SVM) and Logistic Regression by effectively capturing the non-linear relationships between macro-features and price targets.

Model Evaluation Metrics | Metric | Result | Strategic Interpretation | | :— | :— | :— | | Mean Absolute Error (MAE) | 1.024 | Reflects high precision in continuous price variance prediction | | R-square Ratio | 70% | Exceeds the 60% industry benchmark for predictive model viability |

Correlation analysis of these results confirms that positive news coefficients are directly linked to price appreciation, providing a statistically sound foundation for automated trading signals.

7. Limitations, Future Horizons, and Investment Conclusion

Despite the sophistication of distributed algorithmic frameworks, certain systemic boundaries remain.

Critical Limitations

- Exogenous Shocks: Algorithmic models struggle to account for “Black Swan” events or unpredictable acts of nature that override historical patterns.

- Technical Risk: Over-reliance on technical analysis without integrated risk management can lead to catastrophic losses during structural market shifts.

Future Horizons The roadmap for this architecture involves a transition to Real-time Trading Models utilizing Live Streaming Data. By migrating from batch-processed news to real-time event streams, we expect to further minimize the latency between sentiment shifts and executive action, boosting overall model alpha.

Investment Recommendation Based on our integrated analysis of Apple (AAPL), the convergence of strongly positive sentiment scores and high-accuracy predictive modeling (R-square of 70%) justifies a “Positive” outlook. AAPL remains a high-conviction candidate for near-term profit-taking.

The synthesis of Hadoop MapReduce, PySpark, and domain-specific sentiment intelligence represents the new standard for quantitative equity analysis. While no model can eliminate market risk, this multi-layered distributed architecture provides a statistically superior advantage for navigating the complexities of the global financial system.